Is DOCSIS-PIE enabled per specification on VM?

07-11-2021 20:16 - edited 07-11-2021 20:25

Having suffered with poor upstream latency under load (aka 'bufferbloat') for years, as have VM's other customers, I was interested to read a paper[1] today by Flickinger et al.

The paper refers to a newer upstream active queue management (AQM) algorithm, Proportional Integral controller Enhanced (PIE), known colloquially in its cable form as DOCSIS-PIE. More specifically, the paper discusses the impact of implementing DOCSIS-PIE on a (Comcast) DOCSIS network during the COVID-19 pandemic, when everyone started working and schooling from home, thus adding unprecedented load (and with it latency) to networks.
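For anyone unfamiliar with how PIE works, here is a minimal sketch of the core idea: the drop probability is nudged up when queuing delay is above a target or rising, and nudged down otherwise. It is a simplification rather than the DOCSIS-PIE algorithm itself; the names `PieState` and `pie_update` are made up for illustration, the default parameter values are assumptions, and the auto-tuning and burst allowance of the real algorithm are left out.

```python
from dataclasses import dataclass


@dataclass
class PieState:
    drop_prob: float = 0.0     # current drop probability, clamped to 0..1
    prev_delay_s: float = 0.0  # queuing delay seen at the previous update


def pie_update(state, delay_s, target_s=0.010, alpha=0.125, beta=1.25):
    """One periodic update of the drop probability (simplified PI control law).

    target_s, alpha and beta are illustrative defaults (assumptions),
    not values taken from any particular modem or CMTS.
    """
    # Proportional term: how far the current delay is from the target.
    # Derivative-like term: whether the delay is rising or falling.
    delta = alpha * (delay_s - target_s) + beta * (delay_s - state.prev_delay_s)
    state.drop_prob = min(1.0, max(0.0, state.drop_prob + delta))
    state.prev_delay_s = delay_s
    return state.drop_prob


state = PieState()
p_loaded = pie_update(state, 0.250)  # queue has built up to 250 ms
p_drained = pie_update(state, 0.0)   # queue has drained again
```

The point is that the controller reacts to queuing delay rather than queue length, so a large buffer does not have to fill before senders get a signal to slow down.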

A rollout by Comcast with two modems, one with AQM/PIE enabled and one without, showed a difference in upstream latency under load to the order of 15ms (with PIE) vs 250ms (without PIE). A rather staggering difference! I certainly don't see behaviour as good as that on VM, hence the thread/question.

More importantly, the paper goes on to cite that:

In 2014, CableLabs included PIE as the official AQM in the DOCSIS 3.1 specifications as a mandatory feature for the CMTS and cable modems (PIE was also added as an optional feature for earlier generation DOCSIS 3.0 cable modems). At the same time, CableLabs required that DOCSIS 3.1 CMTS equipment support AQM as well but left the algorithm choice to the vendor.

Given it's mandatory in the standard/specification, I'm assuming this is something that VM has enabled for the downstream DOCSIS 3.1 channel on Gig1? What of the other DOCSIS 3.0 channels, where it's optional?

(Edit: I'm asking about the CMTS and the CPE here.)

There's scant information out there on the VM network core, and this is the only place I know of to ask such a question despite the fact the forum is clearly not geared to this type of discussion (regrettably).

I'd be interested to hear a definitive reply back from someone at VM, and I do understand that the forum team will likely need to bounce this over to someone at networks for comment.

It'd be nice to finally have a connection that doesn't collapse into a crippled mess of high latency because someone's phone is backing up to iCloud, when I'm trying to (for example) make a video call or waiting for elements to render on a website. As it is, currently I'm just implementing fq_codel on my OpenBSD router (and running cake+bbr on my *nix boxes). But what of VM's end?

[1] Improving Latency with Active Queue Management (AQM) During COVID-19

By Allen Flickinger, Carl Klatsky, Atahualpa Ledesma, Jason Livingood, Sebnem Ozer

https://arxiv.org/ftp/arxiv/papers/2107/2107.13968.pdf

on 07-11-2021 21:45

I suspect you will never get a response from the forum staff because, as you rightly say, they would need to refer this back to the network team, and VM are notoriously loath (possibly for good reason) to give any information about how the backend systems are actually configured.

However, you partially answered the question yourself. The major cause of latency is the upstream, which uses TDMA and suffers badly if the circuits are over-utilised. None of VM’s internet tiers utilise DOCSIS 3.1 on the upstream; they are still on 3.0 and, as you say, supporting AQM is not obligatory there.

TLDR : the short answer to your question is (almost) certainly NO.

Incidentally, the specs say that DOCSIS 3.1 has to support various flavours of AQM; they don’t mandate that these actually be implemented!

on 07-11-2021 22:26

In my view all this mucking about with packet priorities is simple smoke and mirrors.

If you don't have adequate bandwidth then something will give.

Placing bandwidth contention right down at the sharp end, as DOCSIS networks do, is likely to result in spurious latency spikes as the available carousel slots get exhausted. Prioritisation of certain protocols just means others have to wait.

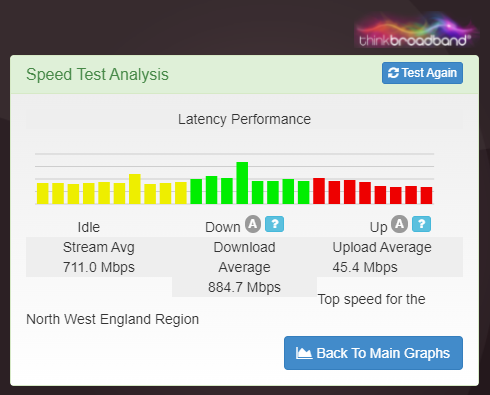

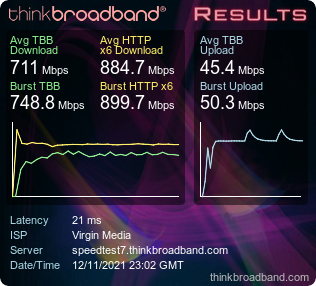

In that regard, I have long believed that ICMP-ECHO packets have been prioritised by VM on certain CMTS equipment to improve the perceived latency (such as that measured by ThinkBroadband BQM graphs) when subject to significant local load.

The worry is that these 'fudges' will prolong the life of an antiquated and outdated infrastructure when investment should really be directed at step change.

on 08-11-2021 02:02

@Eeeps wrote: Prioritisation of certain protocols just means others have to wait.

DOCSIS-PIE is a mandatory part of the DOCSIS 3.1 specification, but it seems VM hasn't implemented it, hence my asking. I may have misunderstood your intention, but cake, fq_codel etc. don't prioritise particular protocols. It's not old-school QoS (put voice first, drop HTTP until there's nobody more important waiting in the queue). It's about optimising the network stack, NIC drivers and OS code to mix and fairly queue packets and distribute them out of the local buffer and onwards without everything choking to a freeze, causing congestion and latency under load. I'm no expert, but a lot of work has been done by people like Dave Täht et al. on this subject and the research is very clear on its benefits. Did you read the paper I linked? It's very interesting. Also, for everyone generally, there's always good info on Bufferbloat.Net. 🙂
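To illustrate what "fairly queue" means here, below is a rough sketch of deficit round robin over per-flow queues, which is the general flavour of scheduling that flow-queuing AQMs build on. It is not the fq_codel or cake implementation (those also run a delay-based dropper per flow and treat sparse flows specially); `drr_schedule`, the flow names and the 1500-byte quantum are all made up for the example.

```python
from collections import deque


def drr_schedule(flows, quantum=1500):
    """Deficit round robin over per-flow queues.

    flows: dict mapping a flow name to a list of packet sizes in bytes.
    Returns the order in which (flow, size) packets leave the queue.
    """
    queues = {name: deque(pkts) for name, pkts in flows.items()}
    deficit = {name: 0 for name in flows}
    active = deque(name for name in flows if queues[name])
    sent = []
    while active:
        name = active.popleft()
        deficit[name] += quantum  # each visit earns the flow a byte budget
        q = queues[name]
        while q and q[0] <= deficit[name]:
            size = q.popleft()
            deficit[name] -= size
            sent.append((name, size))
        if q:
            active.append(name)   # still backlogged, goes to the back of the line
        else:
            deficit[name] = 0     # an idle flow does not bank unused credit
    return sent


# A bulk upload and a small interactive flow arriving together.
order = drr_schedule({"backup": [1500, 1500, 1500, 1500], "call": [200, 200]})
```

In this run the two small "call" packets go out after the first "backup" packet instead of waiting behind all four, and nothing in the scheduler looks at the protocol: each flow simply gets the same byte budget per round.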

on 08-11-2021 07:28

@Eeeps wrote: The worry is that these 'fudges' will prolong the life of an antiquated and outdated infrastructure when investment should really be directed at step change.

Given that VM have publicly stated that DOCSIS will be dead and gone by 2028, replaced by XGSPON, they'll need to start serious conversion (including CPE and local network equipment) pretty soon after the D3.1 rollout is fully completed, which should be done in a matter of months. I'd imagine they're hoping to do the easy stuff first, like moving the earliest Project Lightning areas from RFoG to XGSPON, and perhaps to use contractor capacity that becomes available as the Openreach FTTP programme slows down around 2025-6 to re-lay the HFC as full fibre, but that's cutting it very close indeed if they expect to stick to the 2028 date.

All of which means that VM should be thinking very carefully before investing anything additional in DOCSIS, and there will at some stage be a stop date for DOCSIS which may have unfortunate consequences for customers subject to over-utilisation. Of course, with Openreach FTTP and altnet growth, existing "high speed captive customers" will possibly both have alternative high speed options, and the inevitable VM customer attrition will see a few areas move back within available capacity.

All of which suggests to me that VM latency performance and congestion problems are generally going to stagnate for a few years. I'll be delighted to be proven wrong.

on 14-11-2021 16:05

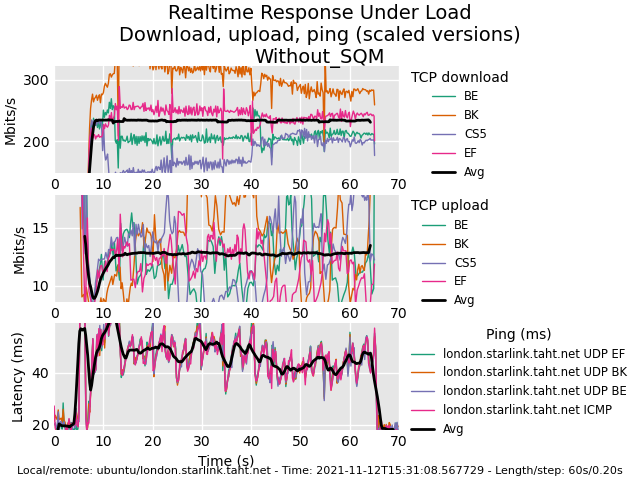

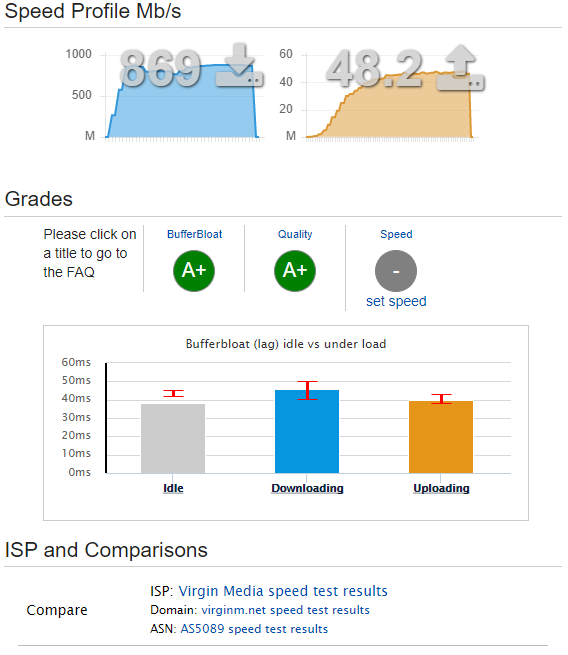

Just a little update for the few who may care. I have been testing the line with the help of one of the Internet's true engineers, Dave Täht. Using flent (specifically the torturous rrul test) to measure latency, I have gone from a baseline (out of the box, default VM) of:

which, to be fair, isn't horrific compared to what I've seen in the past. I did see a lot of issues with bloat though, for example if someone was uploading or downloading something, real time video streams (think those little autoplay videos on Reddit et al.) would just crap out and drop to 240p or stop playing. It was getting a D rating for bufferbloat on DSLReports and Waveform etc.

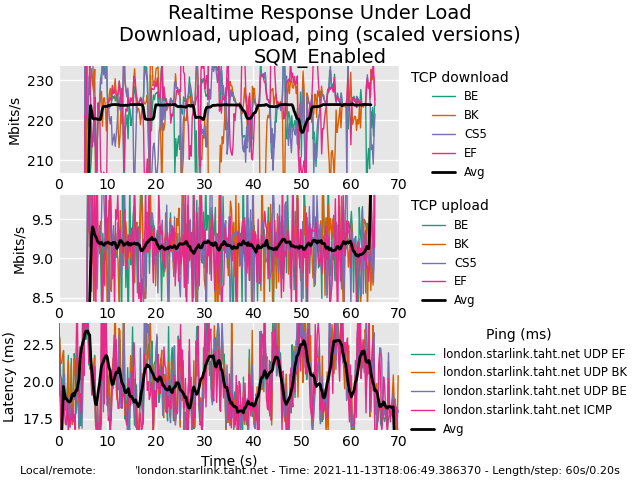

After some tweaking using SQM on OpenWrt, and setting the 'docsis' option in the config, we see a much nicer and more uniform experience:

As you can see, latency under load has gone from spiking >50ms to hovering nicely and neatly around 20ms. There's also better mixing/sharing across the threads. As I said, the rrul test is torturous and emulates torrenting plus multiple up and downstream threads plus heavy ACKs. This is a very nice result! Now Alice and Bob can be fully utilising the upstream and downstream (torrents, HTTP downloads, whatever) on multiple devices, and Dave can still browse with instant snappy responses and load video on demand (or video calling, or whatever) at full HD with no issues. This really ought to be implemented upstream, as most customers won't have the first idea on how to fix this. It's a simple and free fix, as fq_codel, cake, pie and the rest are all FOSS... So why aren't VM on the case? Maybe it takes more customers to notice why their lines feel rubbish, and to start kicking up a fuss.
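For anyone wanting to try something similar on a plain Linux router rather than through the OpenWrt SQM UI, here is a small sketch that builds an egress shaping command using cake with its `docsis` overhead keyword. The interface name, the 90% margin and the helper name `cake_egress_command` are assumptions for illustration only and are not the settings used for the results above; the function only returns the command, it does not run it.

```python
def cake_egress_command(iface, measured_up_mbit, margin_percent=90):
    """Build a `tc` command that shapes egress with cake.

    iface            -- WAN-facing interface name (hypothetical, e.g. "eth0")
    measured_up_mbit -- upstream rate measured on an idle line, in Mbit/s
    margin_percent   -- shape slightly below the measured rate so the queue
                        forms in the router, not in the modem (assumed value)
    """
    if not 0 < margin_percent <= 100:
        raise ValueError("margin_percent must be in the range 1..100")
    rate_kbit = int(measured_up_mbit * 1000) * margin_percent // 100
    return [
        "tc", "qdisc", "replace", "dev", iface, "root",
        "cake", "bandwidth", f"{rate_kbit}kbit",
        "docsis",  # cake keyword for DOCSIS per-packet overhead accounting
    ]


cmd = cake_egress_command("eth0", 50)
print(" ".join(cmd))
```

The list form can be passed straight to `subprocess.run` by someone who has checked it against their own setup. Shaping the downstream needs extra plumbing (an intermediate device for ingress traffic), which this sketch leaves out.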

on 14-11-2021 17:17

@RainmakerRaw wrote: This really ought to be implemented upstream, as most customers won't have the first idea on how to fix this. It's a simple and free fix, as fq_codel, cake, pie and the rest are all FOSS... So why aren't VM on the case? Maybe it takes more customers to notice why their lines feel rubbish, and to start kicking up a fuss.

Tell me, what do you think would happen to the connection if everyone on the same segment implemented the same ‘fixes’ as you did?

on 14-11-2021 17:31

Surely it wouldn't need everyone, because of the high contention ratios VM build to. I'd have thought a relatively modest number of people doing this would bring the network to its knees. Isn't this broadly what happened at the beginning of the year, when the number of home workers using Teams swamped the upstream on many network segments despite modest individual bandwidth needs, and services in many areas ground to a halt?

on 14-11-2021 17:37

...not to mention that I'd see the proposed activity as clearly in breach of clause G10 of the Terms & Conditions, probably in the same class as introducing noise to the network. VM's customary response is disconnection of services.

on 14-11-2021 18:05

You guys are aware that OP is talking about contention on his own service, as in someone else in the household using all his allowed upstream bandwidth on a bulk transfer and drowning out real-time or interactive traffic, right?

What he's implemented will do nothing to impact VM's network.

The load testing is no different from those who obsessively run automated speed tests.

If a segment is congested, AQM isn't going to help. You need LLD and then more capacity per modem, whether by reducing the number of modems on the segment or by increasing the capacity.