Hub3, nonstop broadband dropouts since 30 Jan

on 07-02-2023 15:36

Hi all,

Since 30 Jan, on a Hub 3 with the 250Mbps package and no wiring or any other changes (after having had a perfect connection for ages):

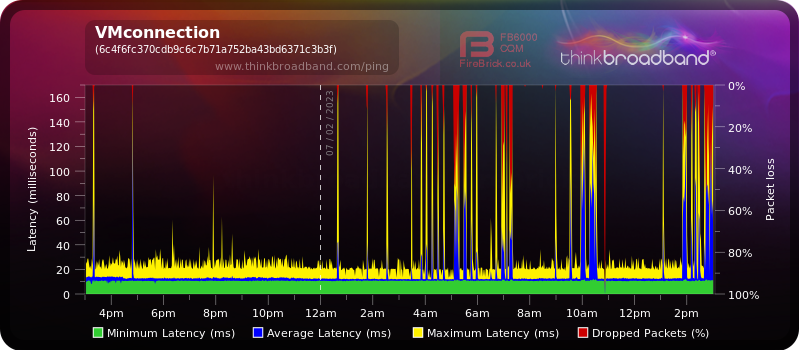

- The broadband connection comes & goes. Some periods are bearable, but often it dies literally every other minute. I have set up a BQM and a recent pic is included here.

- Each time, the Hub 3 logs entries like the recent ones I include below.

I have logged a fault and was treated to an "improving services" message after that, and this morning to an SMS saying everything is fine / fixed. It isn't, as illustrated below. Phone support has been surprisingly unhelpful; they read off the notes that some work in the area fixed all issues.

I just don't know what to do. Please help, it has become unusable - this message took 4 attempts to type.

Network Log

| Time | Priority | Description |
| --- | --- | --- |
| 07/02/2023 14:47:54 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:47:54 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:47:54 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:47:53 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:47:20 | notice | LAN login Success;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:46:32 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:46:32 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:46:31 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:46:31 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:13 | critical | No Ranging Response received - T3 time-out;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:9 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:9 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:9 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:43:47 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:43:47 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:43:47 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:43:46 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:42:28 | Warning! | Lost MDD Timeout;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:42:25 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:42:25 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
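For anyone wanting to tally entries like these before reporting a fault, here is a minimal Python sketch. It assumes the log has been copied out as pipe-separated text in the same shape as the rows above; `parse_row` and `summarise` are hypothetical helper names of my own, not part of any Hub 3 or Virgin Media tooling.

```python
from collections import Counter
from datetime import datetime


def parse_row(line):
    """Parse one '| time | priority | description |' row.

    Returns (timestamp, priority, event) or None for rows that do not
    match (blank lines, header rows, separators).
    """
    cells = [c.strip() for c in line.strip().strip("|").split("|")]
    if len(cells) != 3:
        return None
    when, priority, description = cells
    try:
        # The hub sometimes writes seconds without zero padding (14:45:9);
        # strptime's %S accepts one or two digits.
        timestamp = datetime.strptime(when, "%d/%m/%Y %H:%M:%S")
    except ValueError:
        return None
    # Keep only the event text before the first ';' (drops the CM-MAC etc.).
    event = description.split(";", 1)[0].strip()
    return timestamp, priority, event


def summarise(log_text):
    """Return (event counts, parsed rows) for a pasted log."""
    rows = [r for r in map(parse_row, log_text.splitlines()) if r]
    return Counter(event for _, _, event in rows), rows


# Three rows copied from the log above.
SAMPLE = """\
| 07/02/2023 14:45:13 | critical | No Ranging Response received - T3 time-out;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:9 | critical | SYNC Timing Synchronization failure - Loss of Sync;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
| 07/02/2023 14:45:9 | Warning! | RCS Partial Service;CM-MAC=**:**:**:**:**:**;CMTS-MAC=**:**:**:**:**:**;CM-QOS=1.1;CM-VER=3.0; |
"""

if __name__ == "__main__":
    counts, rows = summarise(SAMPLE)
    for event, n in counts.most_common():
        print(f"{n:3d}  {event}")
```

Pasting the full log in place of `SAMPLE` gives a per-event count, which can be easier to quote to support than the raw table.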

on 15-02-2023 08:22

Unfortunately, no end in sight.

Today the yet-again-moved "fix" estimate expires at 9AM. Based on the weeks and weeks of mutually contradictory VM status nonsense, the clueless out-of-hours "support team", and area issues that were several times "fixed" and then reopened, I am betting that it will not be fixed this time either.

Everybody is very "sorry", but after weeks of this, so far nobody has offered e.g. a backup solution via a mobile network dongle (not even after 23 years as an NTL & VM customer), and nobody who promised to call back with the actual status has even called me back (with the exception of one single person).

Calling the fault line for an "update from our team" ends up with people who have nothing to do with tech faults and understand ZERO of the elementary details I have to tell them (instead of this being the other way round). Yesterday, for the 3rd time in 2 weeks, yet another clueless person wanted to send a technician to my home - which, as shown several times, would get auto-cancelled by their own system due to an area fault... and they still cannot understand the difference.

If I had as many bytes as the number of times I heard "sorry" with not even the remotest semblance of anybody DOING something to help, I'd have internet service...

on 16-02-2023 10:10

Hey there @disrupted, I'm just going to update the public thread in case anyone else from the same area is experiencing this issue.

The repair of the SNR (signal-to-noise ratio) fault has been delayed.

The engineers have advised that they require more time to get the fault fixed.

The reference for anyone who is having the same issue is as follows: F010473215.

The new estimated repair time and date: 9am on the 22nd February 2023

We apologise for any inconvenience caused.

Kind regards,

Ilyas.

on 16-02-2023 12:24

Thank you, possibly some light at the end of the very, very long tunnel.

My non-technical, very serious issue is this: how can it take this many weeks for a customer to get some actual status information instead of, as documented and captured, random and mutually contradictory nonsense via VM's own systems & support staff?

This is aside from the actual effort to fix this. I captured the "fix" notifications about the area fault, so far issued arbitrarily twice, while the fault persisted during and after. It shows that either zero effort was made, and some just like to increase their metrics of "fixed" tickets whilst I have verifiable proof of no actual change whatsoever in the state of the unusable broadband connection... or no testing was done over a time period (often just 1 minute!) that would have shown the problem's continued presence.

Either way, it has revealed multiple fundamental and systemic failures in elementary fault & communication management in VM - at a level that, in my entire and considerable professional experience, is simply breathtaking. Either shocking incompetence or intentional misinformation is in play, via totally shambolic IT systems within VM that are proven to be chaotically self-contradicting.

These will be taken up by forums outside VM, but from VM I shall fully expect adequate compensation for this many weeks of persistent loss of connection, plus the shocking level of misinformation, incompetence, and deliberate ignorance - all of which have been amply recorded and will be put to use.

on 16-02-2023 14:35

Thank you for speaking with us @disrupted today on the forums page.

I'm glad to hear that the fault has been cleared by the engineers and the network team.

As advised - keep an eye on things for the next two weeks or so to ensure everything is okay.

Other than that - feel free to reach out to us for anything and we will assist 🙂

Kind regards,

Ilyas.
